If Alpha Is Changed From .01 To .05, Is It Easier Or Harder To Make A Type I Error?

Type I & Type II Errors | Differences, Examples, Visualizations

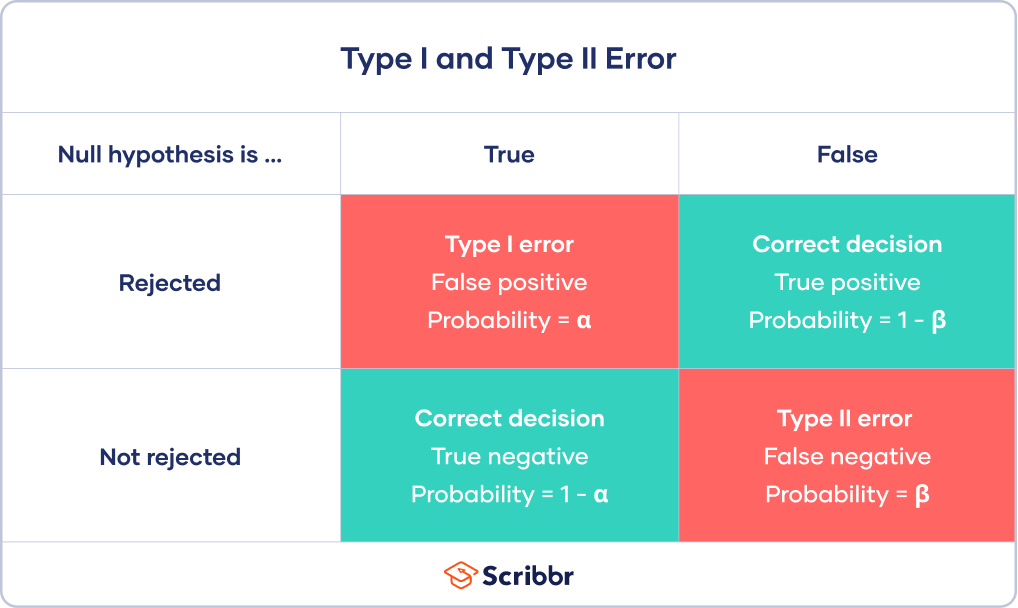

In statistics, a Type I error is a false positive conclusion, while a Type II error is a false negative conclusion.

Making a statistical decision always involves uncertainty, so the risk of making these errors is unavoidable in hypothesis testing.

The probability of making a Type I error is the significance level, or alpha (α), while the probability of making a Type II error is beta (β). These risks can be minimized through careful planning in your study design.

- Type I error (false positive): the test result says you have coronavirus, but you actually don't.

- Type II error (false negative): the test result says you don't have coronavirus, but you actually do.

Error in statistical decision-making

Using hypothesis testing, you can make decisions about whether your data support or refute your research predictions.

Hypothesis testing starts with the assumption of no difference between groups or no relationship between variables in the population: this is the null hypothesis. It's always paired with an alternative hypothesis, which is your research prediction of an actual difference between groups or a true relationship between variables.

For example, suppose you are testing whether a new drug alleviates the symptoms of a disease:

- The null hypothesis (H0) is that the new drug has no effect on symptoms of the disease.

- The alternative hypothesis (H1) is that the drug is effective for alleviating symptoms of the disease.

So, you decide whether the null hypothesis can be rejected based on your data and the results of a statistical test. Since these decisions are based on probabilities, there is always a risk of reaching the wrong conclusion.

- If your results show statistical significance, that means they are very unlikely to occur if the null hypothesis is true. In this case, you would reject your null hypothesis. But sometimes, this may actually be a Type I error.

- If your findings do not show statistical significance, they have a high chance of occurring if the null hypothesis is true. Therefore, you fail to reject your null hypothesis. But sometimes, this may be a Type II error.

A Type II error happens when you get false negative results: you conclude that the drug intervention didn't improve symptoms when it actually did. Your study may have missed key indicators of improvement or attributed any improvements to other factors instead.

Type I error

A Type I error means rejecting the null hypothesis when it's actually true. It means concluding that results are statistically significant when, in reality, they came about purely by chance or because of unrelated factors.

The risk of committing this error is the significance level (alpha or α) you choose. That's a value you set at the beginning of your study as the threshold against which you judge the statistical probability of obtaining your results (the p value).

The significance level is usually set at 0.05 or 5%. This means that you only reject the null hypothesis when results like yours would have a 5% chance or less of occurring if the null hypothesis were actually true.

If the p value of your test is lower than the significance level, it means your results are statistically significant and consistent with the alternative hypothesis. If your p value is higher than the significance level, then your results are considered statistically non-significant.

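For example, suppose your test gives a p value of .035. This is lower than an alpha of .05, so you reject the null hypothesis. However, this p value means that there is a 3.5% chance of your results occurring if the null hypothesis is true. Therefore, there is still a risk of making a Type I error.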

To reduce the Type I error probability, you can simply set a lower significance level.
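A minimal Python sketch of this decision rule is shown below. The function name `is_significant` is hypothetical, and the p value of .035 is simply the illustrative 3.5% figure from the example above, not real data. The same result counts as significant at α = .05 but not at α = .01, which is why a lower alpha makes a false positive harder to reach.

```python
def is_significant(p_value: float, alpha: float) -> bool:
    """Reject the null hypothesis when the p value is below the significance level."""
    if not 0 < alpha < 1:
        raise ValueError("alpha must be between 0 and 1")
    return p_value < alpha


# The illustrative p value of .035, checked against two common significance levels.
p = 0.035
for alpha in (0.05, 0.01):
    decision = "reject H0" if is_significant(p, alpha) else "fail to reject H0"
    print(f"alpha = {alpha:.2f}: p = {p} -> {decision}")
```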

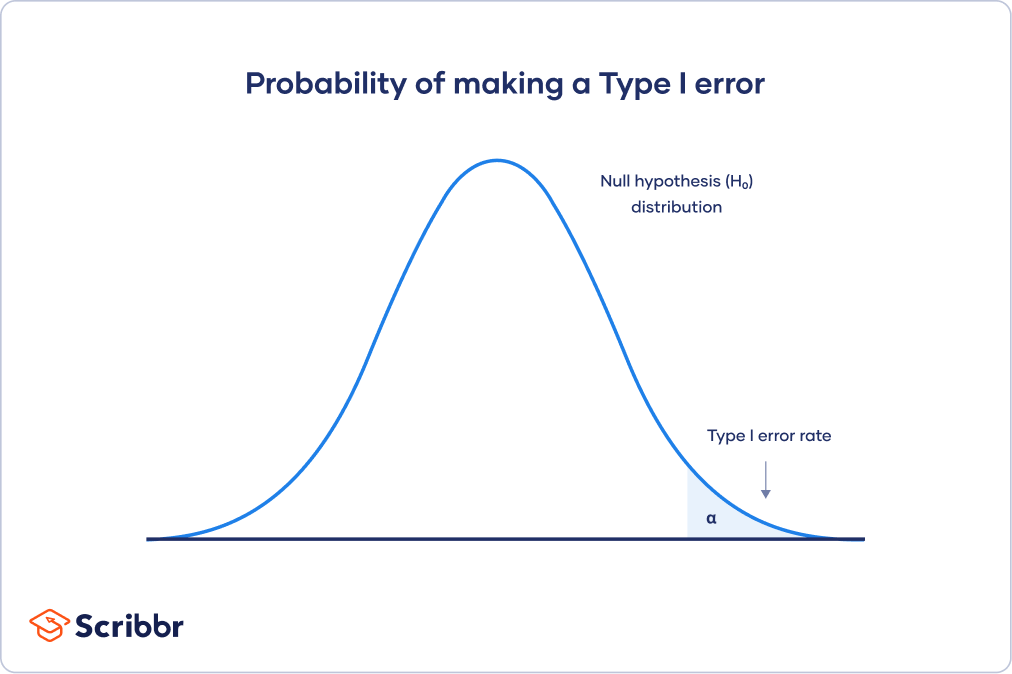

Type I error rate

The null hypothesis distribution curve below shows the probabilities of obtaining all possible results if the study were repeated with new samples and the null hypothesis were true in the population.

At the tail end, the shaded area represents alpha. It's also called a critical region in statistics.

If your results fall in the critical region of this curve, they are considered statistically significant and the null hypothesis is rejected. However, this is a false positive conclusion, because the null hypothesis is actually true in this case!
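The claim that the Type I error rate equals alpha can be checked by simulation. This sketch assumes a two-sided one-sample z test with a known standard deviation of 1 and n = 30 observations per study; those choices, like the function names, are illustrative assumptions rather than part of the original article. It generates many studies in which the null hypothesis (a mean of 0) is true and counts how often the test still rejects it. The rejection rate should land close to alpha, so moving alpha from .01 to .05 should make false positives roughly five times as common, because the critical region widens (the critical |z| drops from about 2.58 to about 1.96).

```python
import math
import random
from statistics import NormalDist, fmean

STD_NORMAL = NormalDist()  # standard normal distribution: mean 0, sd 1


def z_test_p_value(sample, mu0=0.0, sigma=1.0):
    """Two-sided p value for a one-sample z test with a known standard deviation."""
    z = (fmean(sample) - mu0) / (sigma / math.sqrt(len(sample)))
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def type_i_error_rate(alpha, n=30, trials=10_000, seed=1):
    """Fraction of simulated studies that reject H0 even though H0 (mean = 0) is true."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]  # data generated under H0
        if z_test_p_value(sample) < alpha:
            rejections += 1
    return rejections / trials


for alpha in (0.01, 0.05):
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)  # boundary of the two-sided critical region
    rate = type_i_error_rate(alpha)
    print(f"alpha = {alpha:.2f}: critical |z| = {z_crit:.3f}, "
          f"simulated Type I error rate = {rate:.3f}")
```

Because both runs use the same seed, they see identical simulated studies, so every study rejected at α = .01 is also rejected at α = .05.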


Type II error

A Type II error means not rejecting the null hypothesis when it's actually false. This is not quite the same as "accepting" the null hypothesis, because hypothesis testing can only tell you whether to reject the null hypothesis.

Instead, a Type II error means failing to conclude there was an effect when there actually was. In reality, your study may not have had enough statistical power to detect an effect of a certain size.

Power is the extent to which a test can correctly detect a real effect when there is one. A power level of 80% or higher is usually considered acceptable.

The risk of a Type II error is inversely related to the statistical power of a study. The higher the statistical power, the lower the probability of making a Type II error.

However, a Type II error may occur if the true effect is smaller than the effect size your study was powered to detect. A smaller effect is unlikely to be detected in your study due to inadequate statistical power.

Statistical power is determined by:

- Size of the effect: Larger effects are more easily detected.

- Measurement error: Systematic and random errors in recorded data reduce power.

- Sample size: Larger samples reduce sampling error and increase power.

- Significance level: Increasing the significance level increases power.

To (indirectly) reduce the risk of a Type II error, you can increase the sample size or the significance level.
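To see how these factors move power, here is a sketch that computes the power of a two-sided one-sample z test analytically. It assumes normally distributed data with a known standard deviation and expresses the effect as a standardized effect size (the mean difference divided by the standard deviation); `z_test_power` is a hypothetical helper, not a library function. Random measurement error is not modeled directly: in this setup it would inflate the standard deviation and so shrink the standardized effect size.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution


def z_test_power(effect_size, n, alpha=0.05):
    """Power of a two-sided one-sample z test.

    effect_size: true mean difference in standard-deviation units (assumed known sigma).
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = effect_size * math.sqrt(n)  # center of the test statistic under H1
    # Probability that the statistic lands in either tail of the critical region.
    return STD_NORMAL.cdf(shift - z_crit) + STD_NORMAL.cdf(-shift - z_crit)


# Each line changes one determinant of power while holding the others fixed.
print(f"baseline (d = 0.5, n = 30, alpha = .05): {z_test_power(0.5, 30):.2f}")
print(f"larger effect (d = 0.8):                 {z_test_power(0.8, 30):.2f}")
print(f"larger sample (n = 60):                  {z_test_power(0.5, 60):.2f}")
print(f"higher alpha (.10):                      {z_test_power(0.5, 30, alpha=0.10):.2f}")
```

With these assumed inputs, a larger effect, a larger sample and a higher alpha each raise power above the baseline. When the effect size is zero, the "power" collapses to alpha, since every rejection is then a Type I error.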

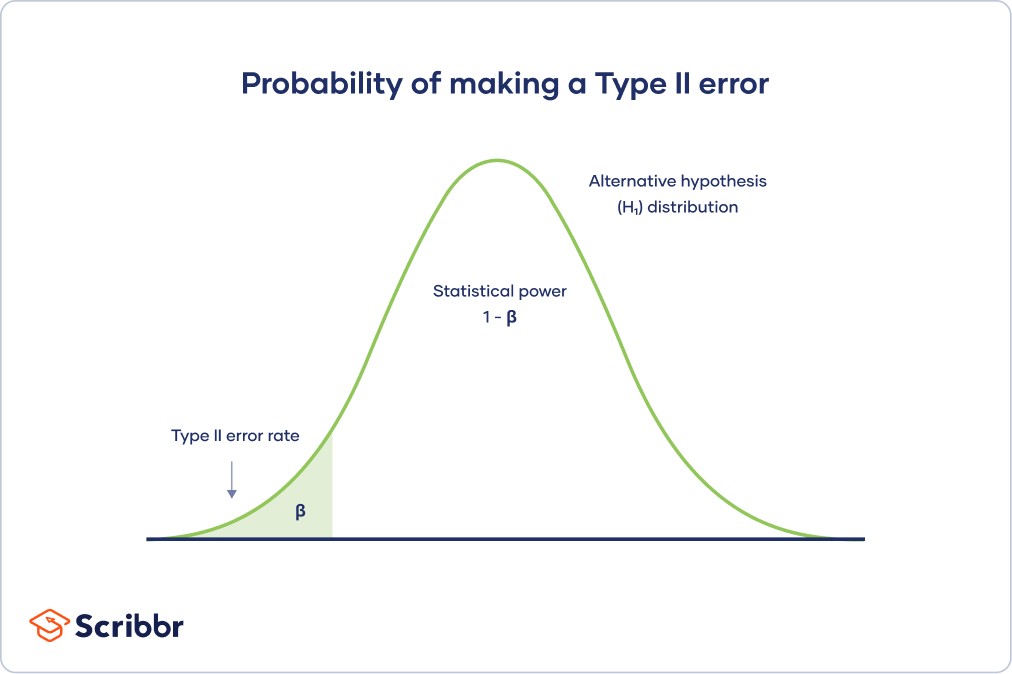

Type II error rate

The alternative hypothesis distribution curve below shows the probabilities of obtaining all possible results if the study were repeated with new samples and the alternative hypothesis were true in the population.

The Type II error rate is beta (β), represented by the shaded area on the left side. The remaining area under the curve represents statistical power, which is 1 – β.

Increasing the statistical power of your test directly decreases the risk of making a Type II error.
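The relationship between beta and power can be made concrete. The sketch below assumes a one-sided (upper-tail) z test with a known standard deviation of 1, so that β is the area of the alternative distribution lying to the left of the critical value, as in the shaded region described above. It computes β directly and then estimates it by simulating studies in which the alternative hypothesis is true. The effect size of 0.4, the sample size of 25 and the function names are arbitrary illustrative assumptions.

```python
import math
import random
from statistics import NormalDist, fmean

STD_NORMAL = NormalDist()  # standard normal distribution


def beta_one_sided(effect_size, n, alpha=0.05):
    """Type II error rate of an upper-tail z test: the area of the alternative
    distribution that falls left of the critical value."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    return STD_NORMAL.cdf(z_crit - effect_size * math.sqrt(n))


def simulated_beta(effect_size, n, alpha=0.05, trials=10_000, seed=7):
    """Fraction of simulated studies that fail to reject H0 when H1 is true."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    misses = 0
    for _ in range(trials):
        sample = [rng.gauss(effect_size, 1.0) for _ in range(n)]  # data generated under H1
        z = fmean(sample) * math.sqrt(n)  # sigma = 1, so the standard error is 1/sqrt(n)
        if z <= z_crit:  # statistic outside the critical region: a miss
            misses += 1
    return misses / trials


beta = beta_one_sided(0.4, 25)
print(f"beta (analytic)  = {beta:.3f}")
print(f"power = 1 - beta = {1 - beta:.3f}")
print(f"beta (simulated) = {simulated_beta(0.4, 25):.3f}")
```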

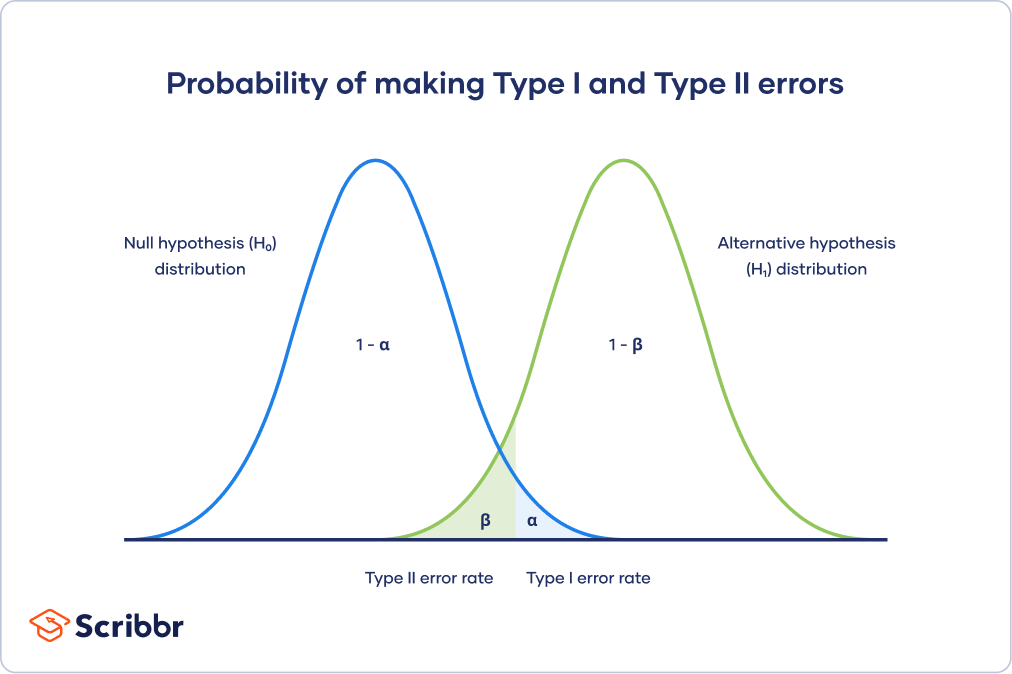

Trade-off between Type I and Type II errors

The Type I and Type II error rates influence each other. That's because the significance level (the Type I error rate) affects statistical power, which is inversely related to the Type II error rate.

This means there's an important trade-off between Type I and Type II errors:

- Setting a lower significance level decreases Type I error risk, but increases Type II error risk.

- Increasing the power of a test by raising the significance level decreases Type II error risk, but increases Type I error risk.

This trade-off is visualized in the graph below. It shows two curves:

- The null hypothesis distribution shows all possible results you'd obtain if the null hypothesis is true. The correct decision for any point on this distribution means not rejecting the null hypothesis.

- The alternative hypothesis distribution shows all possible results you'd obtain if the alternative hypothesis is true. The correct decision for any point on this distribution means rejecting the null hypothesis.

Type I and Type II errors occur where these two distributions overlap. The blue shaded area represents alpha, the Type I error rate, and the green shaded area represents beta, the Type II error rate.

By setting the Type I error rate, you indirectly influence the size of the Type II error rate as well.

It's important to strike a balance between the risks of making Type I and Type II errors. For a given sample size and effect size, reducing alpha comes at the cost of increasing beta, and vice versa.
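Holding the effect size and sample size fixed makes the trade-off visible. This sketch assumes a two-sided one-sample z test with a known standard deviation, a standardized effect size of 0.5 and n = 30 (all illustrative values), and varies only alpha; `beta_two_sided` is a hypothetical helper. As alpha shrinks, beta should grow.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution


def beta_two_sided(effect_size, n, alpha):
    """Type II error rate of a two-sided one-sample z test (known sigma)."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = effect_size * math.sqrt(n)  # center of the test statistic under H1
    power = STD_NORMAL.cdf(shift - z_crit) + STD_NORMAL.cdf(-shift - z_crit)
    return 1 - power


# Hold the effect size and sample size fixed and vary only alpha.
EFFECT_SIZE, N = 0.5, 30
for alpha in (0.10, 0.05, 0.01, 0.001):
    beta = beta_two_sided(EFFECT_SIZE, N, alpha)
    print(f"alpha = {alpha:<6} beta = {beta:.3f}")
```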

Is a Type I or Type II error worse?

For statisticians, a Type I error is usually worse. In practical terms, however, either type of error could be worse depending on your research context.

A Type I error means mistakenly going against the main statistical assumption of a null hypothesis. This may lead to new policies, practices or treatments that are inadequate or a waste of resources.

In contrast, a Type II error means failing to reject a null hypothesis. It may only result in missed opportunities to innovate, but these can also have important practical consequences.

Frequently asked questions about Type I and II errors

- How do you reduce the risk of making a Type I error?


The risk of making a Type I error is the significance level (or alpha) that you choose. That's a value you set at the beginning of your study as the threshold against which you judge the statistical probability of obtaining your results (the p value).

The significance level is usually set at 0.05 or 5%. This means that you only reject the null hypothesis when results like yours would have a 5% chance or less of occurring if the null hypothesis were actually true.

To reduce the Type I error probability, you can set a lower significance level.

- What is statistical significance?


Statistical significance is a term used by researchers to state that it is unlikely their observations could have occurred under the null hypothesis of a statistical test. Significance is usually denoted by a p-value, or probability value.

Statistical significance is arbitrary: it depends on the threshold, or alpha value, chosen by the researcher. The most common threshold is p < 0.05, which means that data at least as extreme as those observed would occur less than 5% of the time under the null hypothesis.

When the p-value falls below the chosen alpha value, then we say the result of the test is statistically significant.

- What is statistical power?


In statistics, power refers to the likelihood of a hypothesis test detecting a true effect if there is one. A statistically powerful test is more likely to reject a false null hypothesis, and therefore less likely to produce a false negative (a Type II error).

If you don't ensure enough power in your study, you may not be able to detect a statistically significant result even when it has practical significance. Your study might not be able to answer your research question.


Source: https://www.scribbr.com/statistics/type-i-and-type-ii-errors/

Posted by: ballardloffinds.blogspot.com
